AI agents are writing code now. That part is settled. The question security teams should be asking is: how do you make those agents write secure code from the start, rather than trying to catch problems after the fact?

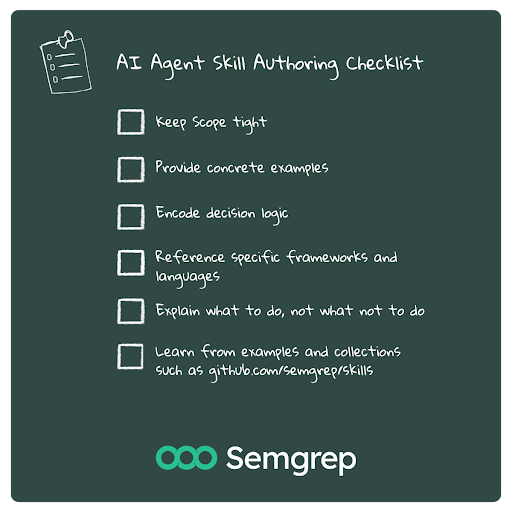

The answer lives in something referred to as "skills." Skills provide structured prompts and rules to shape how an agent behaves when it encounters specific scenarios. Think of them as requirements translated into a language that an LLM can follow. Semgrep has been building these for its own platform, but the principles behind writing good security skills apply broadly to anyone working with AI coding agents.

In this post, we'll break down what makes a security skill effective, what makes one useless, and how to think about the problem if you're building or evaluating agent-based development tools. By the end, you'll be creating agent skills that level up your code security.

What's an Agent Skill?

An Agent Skill is a set of instructions that tells an AI agent how to handle a specific class of problem. In the context of security, that means things like: how to handle user input sanitization, how to manage secrets, how to configure authentication flows, or how to avoid common vulnerability patterns in a given language or framework.

Skills bridge two ideas. On one end, you have static analysis rules (like Semgrep rules) that scan code after it's written and flag problems. On the other, you have coding standards and documents that developers are supposed to read and internalize. Skills occupy the middle ground. They're machine-readable enough for an agent to act on in real time, but expressive enough to capture the nuance of real security decisions.

A good skill doesn't just say "don't use eval()". It provides context: it explains when eval() is dangerous, offers alternatives, describes how to handle edge cases where dynamic code execution is genuinely needed, and shows what the acceptable patterns look like in the specific framework being used.
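To make that concrete, here is a minimal sketch of the kind of "acceptable pattern" a Python-focused skill might show next to the eval() warning. It assumes the input is meant to be a plain data literal; the function name `parse_user_literal` is hypothetical.

```python
import ast


def parse_user_literal(text: str):
    """Parse a Python literal (number, string, list, dict, ...) from untrusted text.

    ast.literal_eval only accepts literal syntax, so function calls, attribute
    access, and imports are rejected instead of being executed.
    """
    try:
        return ast.literal_eval(text)
    except (ValueError, SyntaxError, TypeError) as exc:
        raise ValueError(f"not a plain literal: {text!r}") from exc


# Vulnerable pattern (do not use): eval(text) would run arbitrary expressions.
# Safer pattern:
settings = parse_user_literal("{'retries': 3, 'hosts': ['a', 'b']}")
```

A skill would pair this with guidance for the cases it does not cover, such as input that really does need to be executable.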

Why Traditional Prompting Falls Apart for Security

Most builders' first instinct when trying to make an AI agent security-aware is to add a line to the system prompt like "always write secure code," "act like a security engineer," or "follow OWASP best practices." This does almost nothing beyond eating up a few extra tokens.

General instructions like these fail for the same reason that telling a junior developer to "write secure code" doesn't work. The instructions are too abstract. The agent doesn't know what "secure" means in the specific context of the code it's generating.

Security is highly contextual. A function that's perfectly safe in one context is a critical vulnerability in another. Broad prompting can't capture that, and agents that receive only broad guidance tend to do one of two things: they either ignore the guidance entirely because it's too vague to act on, or they become overly conservative, sometimes even refusing to generate code that they deem potentially dangerous, even when it isn't.

Neither outcome is useful.

How to Write Skills That Work

Most skills fail for the same reason: they're written like documentation rather than decision support. After building and testing hundreds of them, we've identified four structural qualities that consistently separate skills that agents apply reliably from ones they ignore or misapply.

First, they're scoped tightly. A skill that tries to cover "web application security" is going to be too broad to be useful. A skill that covers "SQL query construction in Python using SQLAlchemy" is specific enough that the agent can apply it consistently. The narrower the scope, the more reliable the behavior.

Second, they include concrete examples of both correct and incorrect patterns. Agents learn far more from seeing a vulnerable code snippet next to its fixed version than from reading a paragraph about why parameterized queries matter. A paired example is also usually shorter than the equivalent explanation, which saves tokens when the agent is already working with a large codebase. The examples need to be realistic, too. Toy examples with obvious flaws don't generalize well. The agent needs to see patterns that look like real production code.

Third, they encode decision logic, not just rules. Security often involves tradeoffs. A good skill helps the agent reason about those tradeoffs rather than just applying blanket restrictions. For example, a skill about file upload handling might include guidance on when to use allowlists versus blocklists for file extensions, depending on the application's requirements. That kind of conditional reasoning is what separates a useful skill from a prompted checklist.
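As a rough illustration of decision logic rather than a blanket rule, the sketch below picks between an allowlist and a blocklist based on what the application needs. The extension sets, the function name `is_upload_allowed`, and the `accepts_arbitrary_files` flag are all hypothetical choices made for this example.

```python
from pathlib import PurePosixPath

# Hypothetical policy values for illustration only.
ALLOWED_IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif"}
BLOCKED_EXTENSIONS = {".exe", ".sh", ".php", ".js", ".bat"}


def is_upload_allowed(filename: str, accepts_arbitrary_files: bool = False) -> bool:
    """Decide whether an uploaded file name passes the extension policy."""
    # Keep only the final path component and normalise Windows separators.
    name = PurePosixPath(filename.replace("\\", "/")).name
    suffixes = [s.lower() for s in PurePosixPath(name).suffixes]
    if not suffixes:
        return False
    if accepts_arbitrary_files:
        # Blocklist: the feature must accept many types, so reject only known-bad
        # extensions, checking every suffix to catch names like "shell.php.jpg".
        return not any(s in BLOCKED_EXTENSIONS for s in suffixes)
    # Allowlist: the feature only needs images, so require exactly one
    # extension and require it to be on the list.
    return len(suffixes) == 1 and suffixes[0] in ALLOWED_IMAGE_EXTENSIONS
```

Extension checks alone are not a complete upload defense; the point here is only that the skill tells the agent which branch applies and why.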

Fourth, they reference the specific frameworks and libraries in play. An agent that knows you're using Express.js can apply middleware-specific security patterns. An agent that only knows you're "building a web server" can't. Framework awareness is what turns generic advice into a concrete control the agent can apply.

Where Most Security Skills Go Wrong

The most common failure mode is writing skills that are too prescriptive without being contextual. These read like compliance checklists: "All inputs must be validated. All outputs must be encoded. All database queries must be parameterized." These statements are true, but they don't help an agent make decisions in ambiguous situations.

Another common problem is writing skills that focus exclusively on what not to do. Agents respond much better to positive instruction. Tell them what pattern to use, not just what to avoid. "Always use prepared statements with bound parameters" is more actionable than "never concatenate user input into SQL strings." Both convey the same idea, but the first one gives the agent something to do, while the second one just takes one of many insecure options away. Think of it like allow-listing vs. deny-listing. Allow-listing is the better option in most security-related scenarios because it removes all options other than the safe one.
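Here is a small sketch of the positive pattern, using Python's built-in sqlite3 module so it runs without extra dependencies (a SQLAlchemy-specific skill would show the equivalent with that library's own parameter binding). The table, the function names, and the payload are made up for illustration.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    # Pattern to use: the value travels as a bound parameter, never as SQL text.
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchall()


def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Pattern to avoid, shown only for contrast: user input becomes part of the query.
    cur = conn.execute(
        f"SELECT id, username FROM users WHERE username = '{username}'"
    )
    return cur.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
safe_rows = find_user(conn, payload)           # the payload is treated as a literal name
leaked_rows = find_user_unsafe(conn, payload)  # the payload rewrites the WHERE clause
```

With the bound parameter the payload matches no user, while the concatenated version returns every row in the table.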

Skills also fail when they don't account for the agent's tendency to be helpful above all else. If a developer asks the agent to do something insecure, a poorly written skill might not give the agent enough grounding to push back. The skill needs to include explicit guidance on how to handle requests that conflict with security requirements. Should the agent refuse? Should it offer an alternative? Should it implement the request but add a warning comment? These decisions should be clearly outlined in the skill, and if the otherwise-helpful agent is given permission to push back, it will.

The last major failure mode is skills that aren't testable. If you can't write a test case that verifies whether an agent is following a skill correctly, the skill is probably too vague. Every skill should have a set of scenarios where you can evaluate the agent's output and determine whether the skill was applied. This is where tools like Semgrep's own rule engine become valuable: you can use static analysis to verify that the agent's output conforms to the security patterns you specified in the skill.

For example, write a skill that says "always use prepared statements with bound parameters" and then explicitly ask the agent to concatenate user input into a SQL statement to check that it refuses to write the insecure code. If it doesn't refuse, the skill needs revising.
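The verification step can be automated. The sketch below is a deliberately simple stand-in for a real static analysis rule: it uses Python's ast module to flag any `.execute()` call whose query argument is built with an f-string, `+`, `%`, or `.format()`. The function name `find_dynamic_sql` and the two sample "agent outputs" are hypothetical, and a production check would use a proper rule engine rather than this heuristic.

```python
import ast


def find_dynamic_sql(source: str) -> list[int]:
    """Return line numbers of .execute() calls whose query is built dynamically."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        is_execute_call = (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and bool(node.args)
        )
        if not is_execute_call:
            continue
        query = node.args[0]
        is_fstring = isinstance(query, ast.JoinedStr)
        is_concat = isinstance(query, ast.BinOp) and isinstance(
            query.op, (ast.Add, ast.Mod)
        )
        is_format = (
            isinstance(query, ast.Call)
            and isinstance(query.func, ast.Attribute)
            and query.func.attr == "format"
        )
        if is_fstring or is_concat or is_format:
            flagged.append(node.lineno)
    return sorted(flagged)


# Hypothetical agent outputs for the same prompt, with and without the skill applied.
insecure_output = '''
def get_user(cur, name):
    cur.execute("SELECT * FROM users WHERE name = '" + name + "'")
'''
secure_output = '''
def get_user(cur, name):
    cur.execute("SELECT * FROM users WHERE name = ?", (name,))
'''
```

A test harness would then assert that `find_dynamic_sql` returns an empty list for every output the agent produces under the skill.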

The Vulnerability Detection Problem

There's a related challenge that's becoming more relevant as AI-generated code makes up a larger share of production codebases: detection. How can we tell which code is AI-generated and which is not, and does it even matter from a security perspective?

It matters because AI-generated code has different risk characteristics than human-written code. Agents tend to produce code that's syntactically correct and functionally reasonable but occasionally misses security nuances that an experienced engineer would catch instinctively. The vulnerabilities in AI-generated code aren't usually the obvious ones. They're subtle issues like improper error handling that leaks stack traces, race conditions in authentication checks, or TOCTOU (time-of-check-to-time-of-use) bugs in file operations.
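For readers who haven't run into TOCTOU before, here is a minimal Python sketch of the check-then-use shape and one common way to avoid it. Both function names are hypothetical, and real file-handling code usually needs more than this (permissions, symlinks, and so on).

```python
import os


def read_if_present_racy(path: str):
    # Check, then use: the file can be deleted or replaced between the
    # exists() call and the open() call.
    if os.path.exists(path):
        with open(path, "r", encoding="utf-8") as fh:
            return fh.read()
    return None


def read_if_present(path: str):
    # Use directly and handle the failure: there is no gap between a
    # separate check and the operation it was meant to guard.
    try:
        with open(path, "r", encoding="utf-8") as fh:
            return fh.read()
    except FileNotFoundError:
        return None
```

Both versions look reasonable and pass the same functional tests, which is why this class of bug tends to slip through review.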

Knowing which parts of your codebase were generated by AI helps you prioritize your review efforts. If you can tag AI-generated code, you can run more aggressive static analysis on those sections, apply stricter review requirements, or use tools that are specifically tuned for the kinds of mistakes agents tend to make.

This is where security skills and AI detection become complementary. Skills reduce the number of security issues in AI-generated code upfront. Detection lets you identify AI-generated code that made it into production without adequate skill coverage. Together, they form a feedback loop: detection tells you where your skills are failing, and improved skills reduce the volume of issues detection needs to catch.

Building Skills for Your Own Stack

Take a practical approach to building security skills for your organization. Your first ten skills should match your top ten most frequent findings. If you've been running Semgrep or another static analysis tool, you already have this data.
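Pulling that list out of a scan report can be a few lines of code. The sketch below assumes a JSON report with a top-level `results` list whose entries carry a `check_id` field, which is the shape of Semgrep's JSON output; other tools will need different field names. The rule IDs in the sample report are invented.

```python
import json
from collections import Counter


def top_rules(report_json: str, n: int = 10):
    """Return the n most frequent rule IDs in a scan report as (rule_id, count) pairs."""
    report = json.loads(report_json)
    counts = Counter(result["check_id"] for result in report.get("results", []))
    return counts.most_common(n)


# Hypothetical report contents for illustration.
sample_report = json.dumps(
    {
        "results": [
            {"check_id": "sql-injection", "path": "app/db.py"},
            {"check_id": "path-traversal", "path": "app/files.py"},
            {"check_id": "sql-injection", "path": "app/reports.py"},
            {"check_id": "xss", "path": "app/views.py"},
            {"check_id": "sql-injection", "path": "app/admin.py"},
            {"check_id": "path-traversal", "path": "app/export.py"},
        ]
    }
)

skill_backlog = top_rules(sample_report, n=2)
```

The resulting list is your skill backlog, ordered by how often each problem shows up.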

For each of those findings, write a skill that includes four things: a description of the vulnerability class in plain language, examples of vulnerable code from your actual codebase (anonymized if needed), the corresponding secure implementation, and a brief explanation of why the secure version works. Keep the language direct and specific. Avoid jargon that the agent might misinterpret. A great resource for this type of language is the set of cheat sheets in the Semgrep docs.
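One way to keep those four parts consistent across skills is to generate the skill file from a small template. This is only a sketch: the `SecuritySkill` class, its section labels, and the Markdown layout are assumptions made for this example, not a required skill format, and the example snippets are placeholders for code from your own codebase.

```python
from dataclasses import dataclass

FENCE = "`" * 3  # built programmatically so this example nests cleanly in Markdown


@dataclass
class SecuritySkill:
    title: str
    description: str          # vulnerability class in plain language
    vulnerable_example: str   # ideally taken from your own codebase
    secure_example: str       # the corresponding fixed version
    why_it_works: str         # brief explanation of the fix
    language: str = "python"

    def render(self) -> str:
        def code(snippet: str) -> str:
            return f"{FENCE}{self.language}\n{snippet.strip()}\n{FENCE}"

        sections = [
            f"# {self.title}",
            self.description.strip(),
            "Vulnerable pattern:",
            code(self.vulnerable_example),
            "Secure pattern:",
            code(self.secure_example),
            "Why this works:",
            self.why_it_works.strip(),
        ]
        return "\n\n".join(sections) + "\n"


skill = SecuritySkill(
    title="SQL queries with sqlite3",
    description="Queries built by string concatenation let user input change the SQL statement.",
    vulnerable_example='cur.execute("SELECT * FROM users WHERE id = " + user_id)',
    secure_example='cur.execute("SELECT * FROM users WHERE id = ?", (user_id,))',
    why_it_works="Bound parameters are passed as data, so they cannot alter the query structure.",
)
skill_text = skill.render()
```

Writing `skill_text` to a file gives you a skill with all four parts in a predictable order, which also makes missing sections easy to spot in review.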

Then test each skill by running a set of prompts through your agent and evaluating the output. Ask the agent to build a feature that would naturally involve the vulnerability class you're targeting. Does the agent produce secure code? Does it produce insecure code and then catch it? Does it produce insecure code and miss it entirely? The answers tell you how to refine the skill.

The Skill Change Management Layer

Security is an organizational problem. For a larger team, creating and refining these skills could be a full-time job in itself. Someone needs to own the skill set. Skills need to be maintained as frameworks change, as new vulnerability classes emerge, and as your threat model evolves. Treating them as static is a recipe for gradual irrelevance.

You also need a process for updating skills based on real-world outcomes. When a security issue makes it into production, trace it back:

Was there a skill that should have caught it?

If so, why didn't it work?

Was the skill too vague?

Did the agent misinterpret it?

Was the scenario not covered?

This feedback loop is how you get skills that improve over time.

As a result of our collective learning, Semgrep open sourced a collection of skills that you can use to get started.

Here's a snippet from code-security/rules/path-traversal.md to give you an idea of what a skill looks like when it pairs a description and examples from a codebase with a secure implementation:

[Image: snippet from code-security/rules/path-traversal.md]
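To show the kind of secure implementation a path traversal skill points an agent toward, here is an independent Python sketch. It is not the contents of path-traversal.md; the function name `resolve_inside` is hypothetical, and it assumes Python 3.9 or later for `Path.is_relative_to`.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Resolve user_path under base_dir, rejecting anything that escapes it."""
    base = Path(base_dir).resolve()
    # resolve() collapses ".." segments and follows symlinks before the check.
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):
        raise ValueError("path escapes the base directory")
    return candidate
```

Because the check runs on the fully resolved path, both `../../etc/passwd` and an absolute path such as `/etc/passwd` are rejected, while ordinary relative paths under the base directory pass through.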