AI can find code security vulnerabilities that traditional tooling can't. Business logic flaws, IDORs, broken access control. These are vulnerability classes that have historically required a human reviewer to identify. Semgrep has been evaluating AI's performance on these kinds of problems, and early results show that AI can catch what pattern matching misses. For many organizations, these are also the vulnerability classes that command significant bug bounty payouts.

The capability is real. But putting AI into production for code security introduces operational challenges that are worth understanding:

Variable costs. Token spend is hard to predict, hard to budget for, and has the potential to grow.

Inconsistent output. Outputs vary between runs. You can't reproduce results, which breaks trust, compliance, and review workflows.

No auditability. Information goes in, a result comes out, and you can't trace the reasoning path.

Hallucinations. False positives from AI erode the developer trust that security teams depend on.

Scale. What works in a proof of concept doesn't automatically work across your full repository fleet. Running AI org-wide means solving for latency, orchestration, observability, and cost simultaneously. On large repos, sequential execution can't keep pace with PR volume, and most teams don't have the infrastructure to parallelize it.

We've heard these concerns consistently from teams exploring AI for AppSec, and the pressure to solve them is growing. Developers using AI coding assistants are shipping more code and more PRs, and vulnerability volume is growing along with that output. Manual review alone can't keep up. The question isn't whether to bring AI into your security program. It's how to do it in a way that's accountable, cost-efficient, and reproducible.

The tradeoff between tools and AI

Most AppSec teams today rely on deterministic tools. As they evaluate how to bring AI into their programs, they're facing a tradeoff.

Deterministic tools like Semgrep's analysis engine are fast, consistent, and cheap to run. But they have a capability ceiling. They operate on syntax and data flow, not semantics. They can't reason about business logic, evaluate authorization models, or assess whether a finding is actually exploitable in context.

AI has shown it can handle these kinds of tasks. But it's expensive, inconsistent, and hard to audit.

Teams that try to combine the two on their own end up wrapping APIs that weren't designed for security automation, building custom orchestration layers, and maintaining infrastructure they didn't plan for. That approach doesn't scale to hundreds of repositories and thousands of developers.

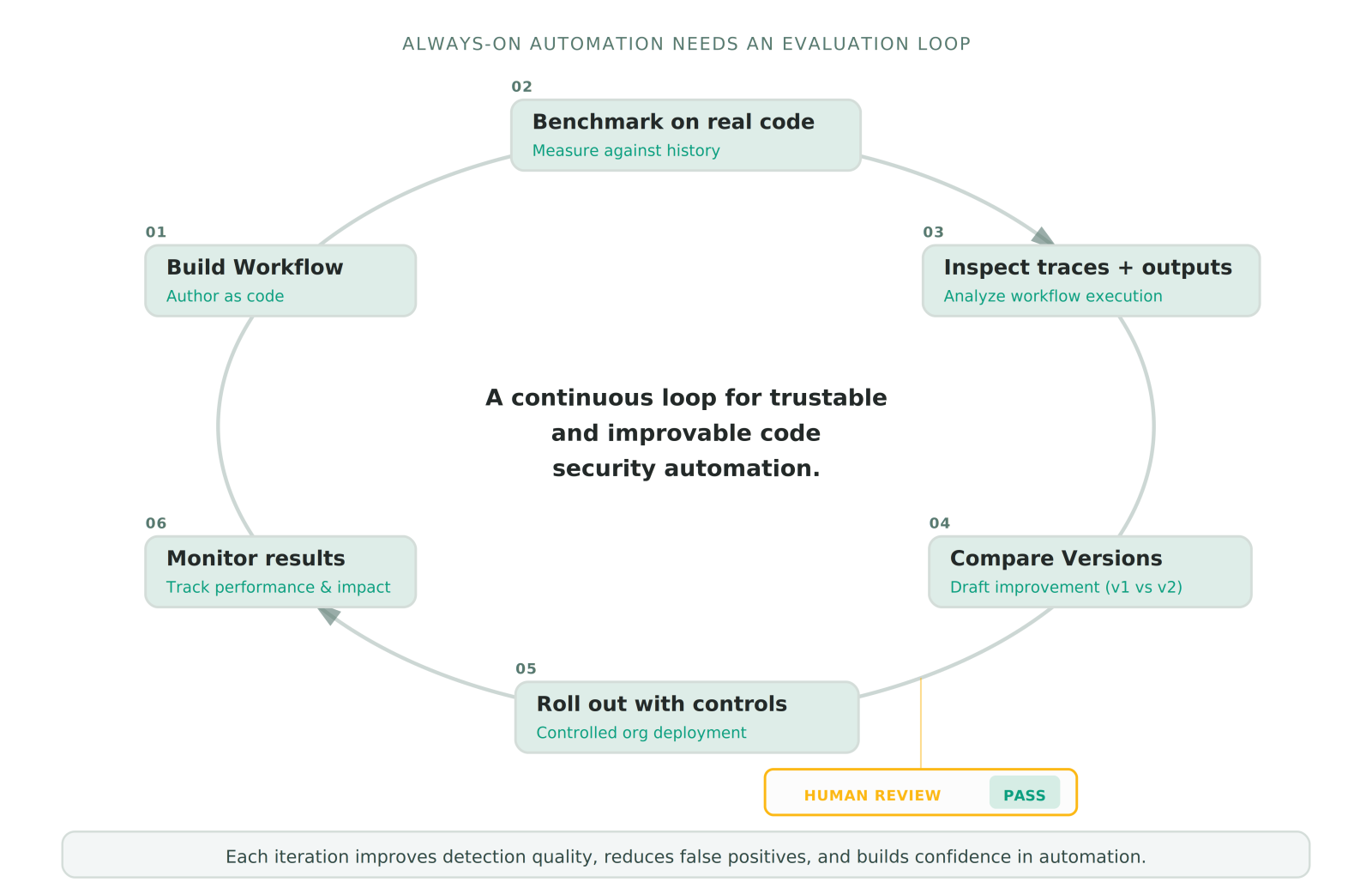

Semgrep Workflows solves this by giving teams a programmable platform to combine deterministic analysis and AI into pipelines that are testable, auditable, and cost-controlled, with managed infrastructure that scales across your full repository fleet.

From custom rules to custom workflows

Semgrep was built on a core belief: no vendor can foresee every company's code security needs. Customization is essential, and it must be simple yet powerful. Semgrep Custom Rules brought that philosophy to vulnerability detection. Today, Semgrep infrastructure processes millions of code scans a week, with thousands of teams using custom rules to encode exactly what vulnerabilities and anti-patterns uniquely matter in their codebase.

Custom Workflows extends this philosophy across the entire code security loop: what gets detected, how findings are triaged, how they're validated, and how they get resolved. Each workflow is a multi-step pipeline where deterministic tools handle code scanning, policy checks, and validation, while AI steps handle tasks that require reasoning over code context: classifying whether a finding is exploitable, synthesizing evidence across files, or even generating a fix.

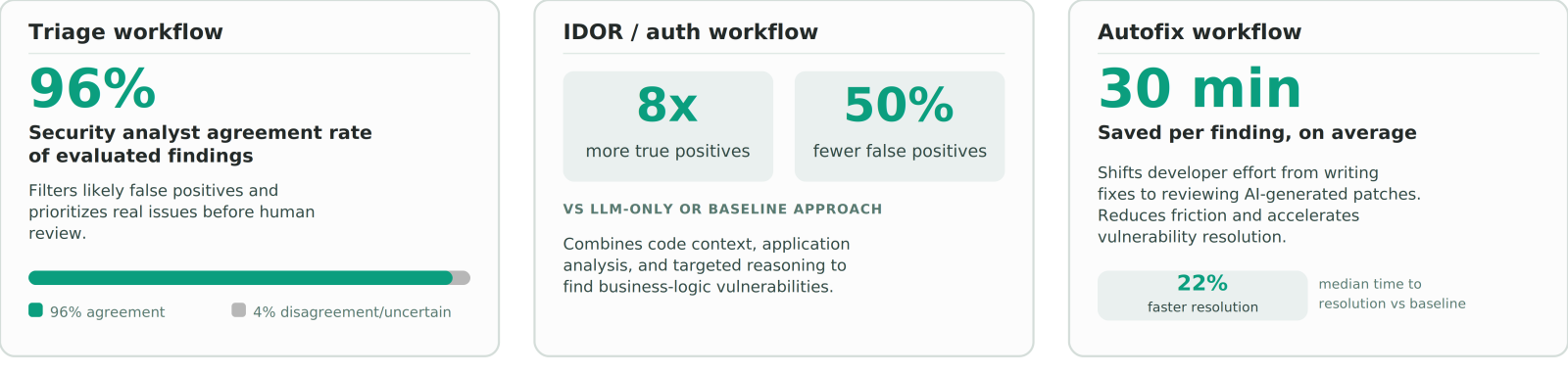

Semgrep's own Multimodal detection is a Workflow built on this platform. It uses the Pro Engine's taint analysis to trace where user input flows into sensitive operations like database queries or API responses, then passes that analysis to an LLM that reasons about whether authorization checks are missing along those paths. That combination finds business-logic vulnerabilities like IDORs and broken access control that neither static analysis nor LLMs catch reliably on their own. Our research found that Semgrep's Workflow-based approach to IDOR detection produced 8× more true positives and 50% fewer false positives than an LLM-only baseline. In that baseline, 88% of findings were false positives.
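
The two-stage idea described above can be sketched in a few lines of Python. This is an illustrative sketch only, not Semgrep's implementation or SDK: `TaintPath`, `missing_authz`, and `fake_model` are hypothetical names, and the model call is replaced by an offline stand-in so the example runs without any API access.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TaintPath:
    """Hypothetical record of one source-to-sink flow found by static analysis."""
    source: str   # where user input enters
    sink: str     # sensitive operation the input reaches
    snippet: str  # code observed along the path


def missing_authz(path: TaintPath, ask_model: Callable[[str], str]) -> bool:
    """Ask a model whether an authorization check is missing on this path.

    `ask_model` is any callable that maps a prompt to a text answer.
    """
    prompt = (
        "User input flows from the source to the sink below.\n"
        f"Source: {path.source}\nSink: {path.sink}\nCode: {path.snippet}\n"
        "Is an authorization check missing? Answer YES or NO."
    )
    return ask_model(prompt).strip().upper().startswith("YES")


def fake_model(prompt: str) -> str:
    """Offline stand-in for an LLM (assumption): looks for an ownership check."""
    return "NO" if "owner_id ==" in prompt else "YES"


# Stage 1 (deterministic) would produce these paths; they are hard-coded here.
paths = [
    TaintPath("request.args['id']", "Order.get", "order = Order.get(id)"),
    TaintPath(
        "request.args['id']",
        "Order.get",
        "order = Order.get(id); assert order.owner_id == user.id",
    ),
]

# Stage 2 (reasoning) only looks at paths the static analysis already narrowed down.
flagged = [p for p in paths if missing_authz(p, fake_model)]

if __name__ == "__main__":
    for p in flagged:
        print(f"possible IDOR: {p.source} -> {p.sink}")
```

The design point is that the model never sees the whole repository: the deterministic stage narrows the input to concrete paths, which keeps the prompt small and makes each verdict traceable to a specific flow.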

The same platform that powers Multimodal detection is now available in Private Beta for teams to build their own Custom Workflows.

How it works

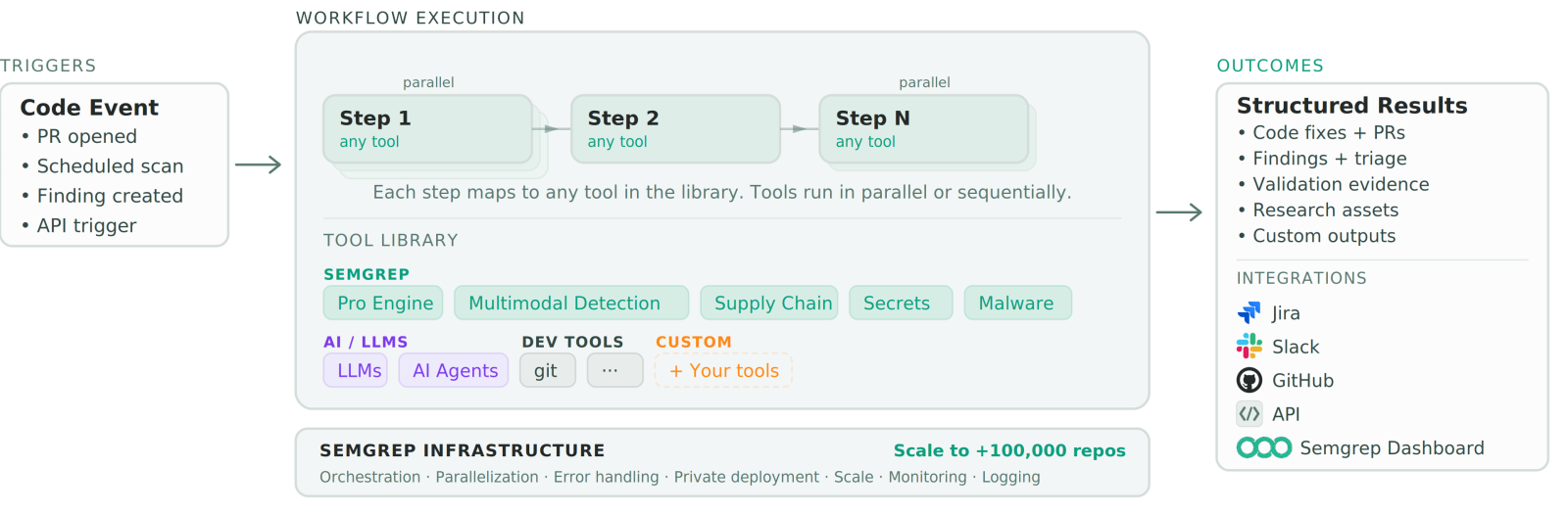

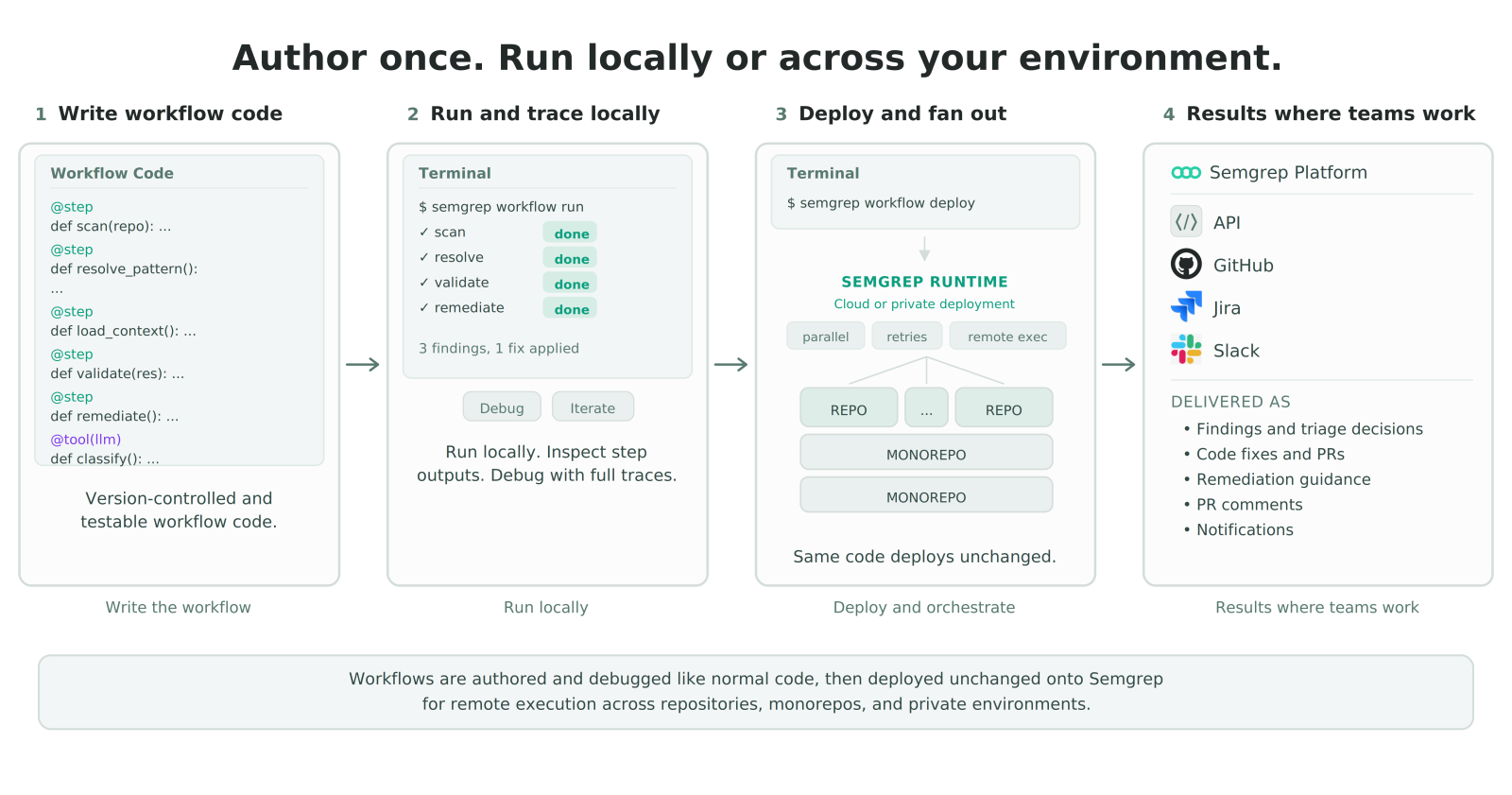

Workflows are defined in code, using a Python SDK that lets workflow writers work in their normal development environment with their favorite coding LLMs. Think of it as programmable CI for security: instead of stitching together scripts and API calls, you define typed, testable pipeline steps in a real development environment. At a high level, each workflow defines:

Triggers. What starts the workflow: PR events, scheduled scans, webhooks, or API calls.

Steps. Methods with typed inputs and outputs that run in parallel or sequentially. Each step maps to any tool in the library: Semgrep's analysis engines, LLMs, dev tools like git, or even your own custom tools.

Outcomes. Structured results delivered into the systems your team already uses: Jira, Slack, GitHub, or the Semgrep dashboard.
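
To make the trigger, step, and outcome structure concrete, here is a minimal sketch in plain Python. It does not use the Semgrep Workflows SDK, whose API is not shown in this post; every class and function name below (`Trigger`, `Finding`, `scan_step`, `triage_step`, `report_step`, `run_workflow`) is hypothetical, and the scanner and AI steps are replaced by fixed stand-ins so the example runs offline.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Trigger:
    kind: str  # e.g. "pull_request", "schedule", "webhook", "api"
    repo: str


@dataclass(frozen=True)
class Finding:
    rule_id: str
    path: str
    line: int


@dataclass(frozen=True)
class Verdict:
    finding: Finding
    exploitable: bool
    reason: str


@dataclass(frozen=True)
class Outcome:
    destination: str  # e.g. "pr_comment", "jira", "slack"
    message: str


def scan_step(trigger: Trigger) -> list[Finding]:
    """Deterministic step: stand-in for a scanner, returning fixed findings."""
    return [
        Finding("hypothetical.idor", "api/orders.py", 42),
        Finding("hypothetical.sqli", "tests/test_db.py", 7),
    ]


def triage_step(finding: Finding) -> Verdict:
    """Reasoning step: stand-in for an AI call, using a fixed rule instead."""
    in_tests = finding.path.startswith("tests/")
    reason = "test-only code" if in_tests else "reachable from a request handler"
    return Verdict(finding, exploitable=not in_tests, reason=reason)


def report_step(verdicts: list[Verdict]) -> list[Outcome]:
    """Outcome step: turn exploitable verdicts into structured results."""
    return [
        Outcome(
            "pr_comment",
            f"{v.finding.rule_id} at {v.finding.path}:{v.finding.line} ({v.reason})",
        )
        for v in verdicts
        if v.exploitable
    ]


def run_workflow(trigger: Trigger) -> list[Outcome]:
    findings = scan_step(trigger)              # sequential: scan first
    with ThreadPoolExecutor(max_workers=4) as pool:
        verdicts = list(pool.map(triage_step, findings))  # parallel triage
    return report_step(verdicts)               # sequential: report last


if __name__ == "__main__":
    for outcome in run_workflow(Trigger("pull_request", "example/repo")):
        print(outcome.destination, outcome.message)
```

Because each step has typed inputs and outputs, it can be unit tested on its own, and the reasoning step can be swapped for a real model call without touching the scan or report steps.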
